In 2020, we created a composite Community Health metric that captures four key dimensions of student engagement and achievement. Along the way, we confirmed something we already knew: instructors who use many of our best practices have Communities that perform significantly better than those of instructors who use fewer. Over the past three years, we’ve continued to gather data and feedback that support this finding.

However, we also discovered something new: following our best practices virtually guarantees success! New instructors using Yellowdig sometimes worry that our recommendations could lead to disaster in their courses, but our data indicate quite the opposite: it is riskier to ignore these practices.

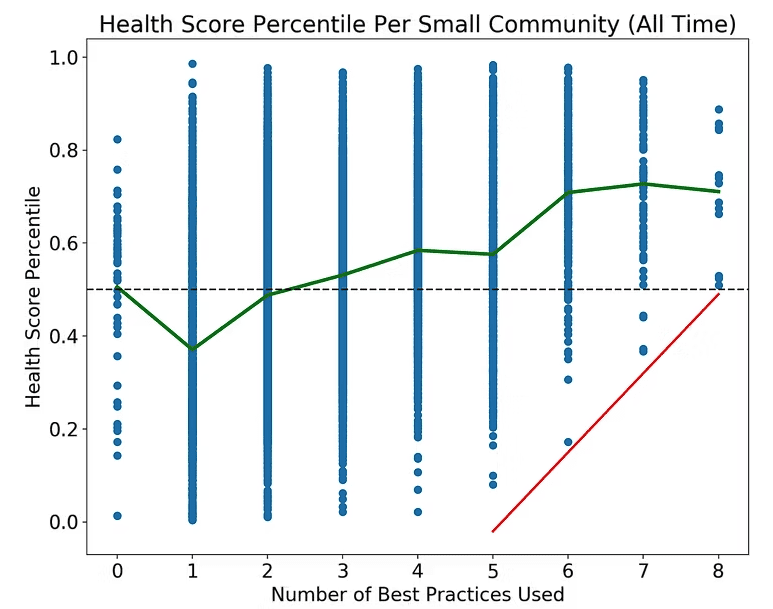

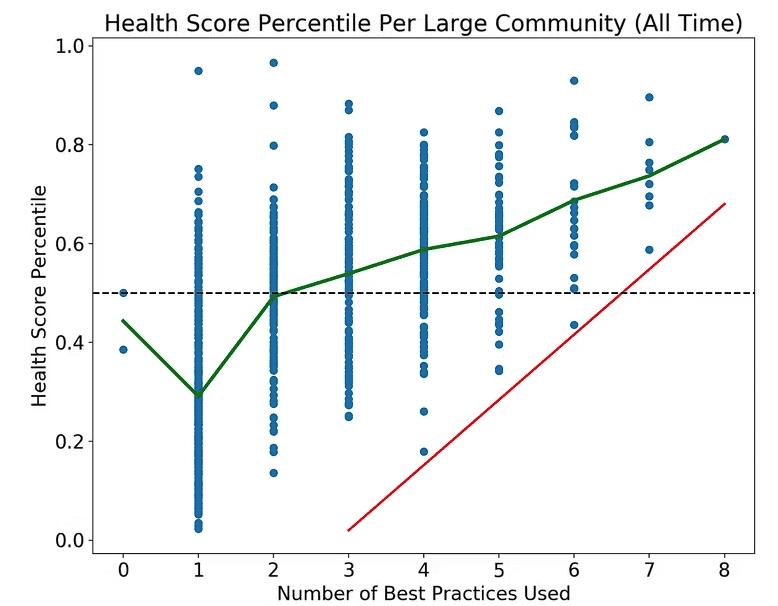

Almost 100% of instructors who used all (or nearly all) of our best practices had online Communities that were well above the 50th percentile on Community Health. By contrast, most instructors who used few of our best practices scored below the 50th percentile.

Because this analysis included every Yellowdig Community with at least 10 students and 20 combined Posts and Comments (17,843 Communities at over 50 institutions), these outcomes can be expected regardless of institution type, student population, or other pedagogical decisions made by instructors.

Our other data (e.g., a report we did with ASU) show that Yellowdig activity and measures of Community Health like the conversation ratio (the number of Comments per Post) are also related to grades and retention. Therefore, while it requires a slight shift in mindset from traditional discussions, aligning your pedagogy with our design and best practices is the least risky and most responsible way to use Yellowdig.

What is Community Health?

Our composite metric was derived from four dimensions of student engagement and achievement, which typify successful Communities:

- Conversation ratio, or the number of Comments divided by the number of Posts. The higher the conversation ratio, the more students are engaging in authentic, back-and-forth conversations with each other.

- Earned proportion of 100% participation goal, or how many points students earned relative to the total participation goal. If this proportion is over 1.0, students earned more points than were required for a perfect Yellowdig grade. Meeting and exceeding the participation goal is a sign that students are more intrinsically motivated to participate. (In other words, they find value in the Community and are choosing to take part.)

- Content per student, or the total number of Posts + Comments per student. The more students write, the more engaged they are, all else being equal.

- Words over minimum, or the average number of words students wrote per Post/Comment in excess of the minimum word requirement for earning points. Like the earned proportion of 100% participation goal, this metric is a sign of intrinsic motivation to participate beyond requirements.

Each of these metrics was converted into a percentile score, and the mean of those percentiles became our Community Health metric.
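As a rough illustration of the calculation described above, here is a minimal Python sketch. It is not our production code: the `CommunityStats` fields are hypothetical names, and the mid-rank percentile convention is an assumption, since several percentile conventions are in common use.

```python
from bisect import bisect_left, bisect_right
from dataclasses import dataclass
from statistics import mean


@dataclass
class CommunityStats:
    """Hypothetical per-Community inputs; field names are illustrative."""
    posts: int
    comments: int
    students: int
    earned_proportion: float   # points earned / participation goal
    words_over_minimum: float  # mean words per Post/Comment above the minimum


def raw_metrics(c):
    """Return the four dimensions as floats (assumes posts > 0 and students > 0)."""
    return (
        c.comments / c.posts,                 # conversation ratio
        c.earned_proportion,
        (c.posts + c.comments) / c.students,  # content per student
        c.words_over_minimum,
    )


def percentile_ranks(values):
    """Percentile rank (0-100) of each value in the list; ties share a mid-rank."""
    ordered = sorted(values)
    n = len(ordered)
    ranks = []
    for v in values:
        below = bisect_left(ordered, v)
        equal = bisect_right(ordered, v) - below
        ranks.append(100.0 * (below + 0.5 * equal) / n)
    return ranks


def community_health(communities):
    """Mean of the four per-metric percentile ranks for each Community."""
    rows = [raw_metrics(c) for c in communities]
    columns = list(zip(*rows))  # one tuple of values per metric
    ranked = [percentile_ranks(list(col)) for col in columns]
    return [mean(metric[i] for metric in ranked) for i in range(len(rows))]
```

Because each dimension is ranked separately before averaging, a Community cannot reach a high composite score on the strength of one dimension alone.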

Our Client Success team prides itself on having data-backed best practices that are open to revision in the face of new data. Creating a new Community Health metric for the Yellowdig Awards presented us with a new opportunity to put our best practices to the test. We plotted the composite Community Health score for every Community in Yellowdig with at least 10 students and 20 combined Posts and Comments.

Is there a relation between a Community’s Health Score and the number of best practices instantiated by the instructor?

The answer is a resounding yes. Even more interesting (and perhaps surprising to those who find some of our suggestions unorthodox) is that instantiating more best practices also increased baseline Community Health. In other words, using our best practices not only increases your likelihood of success (seen in the upward slope of the green line in the graphs above), but also practically eliminates the possibility that your Community will fail (seen through the complete absence of blue dots below the red line in the lower-right quadrant of both graphs).

This effect was even more pronounced in large Communities (defined as 100+ board followers) than in small Communities (defined as 10 to 100 board followers). We infer this is because professors have a bigger “voice” in small Communities and can therefore influence them more, both positively and negatively. Instructors in small Communities can also employ time-consuming strategies for managing individual student behavior, strategies that are unnecessary and become untenable in larger classes. Our data suggest that these strategies likely backfire as often as they succeed. Regardless, rather than being a gamble, embracing our best practices is by far the safest course of action and will all but guarantee above-average outcomes.

Each blue point represents a Community. The green line cuts through the mean Community Health score for each number of best practices used. On the conventional interpretation of Pearson’s r, the effect size for small Communities was small to moderate (r=0.35, p<0.001, N=16,971), whereas the effect size for large Communities was moderate to strong (r=0.66, p<0.001, N=872). Considering the amount of noise in these data sets due to factors such as student population, institution type (R1s, small liberal arts, for-profit, etc.), and instructor characteristics, these effect sizes are quite large.
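For readers who want to run the same kind of check on their own data, the sketch below shows one way to compute Pearson’s r and the per-count means that a line like the green one passes through. The function names are hypothetical, and the inputs are assumed to be two parallel lists: the number of best practices used and the Community Health score for each Community.

```python
from collections import defaultdict
from math import sqrt
from statistics import mean


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)  # undefined if either sequence is constant


def mean_health_by_practice_count(practice_counts, health_scores):
    """Mean Community Health for each distinct number of best practices used."""
    groups = defaultdict(list)
    for count, score in zip(practice_counts, health_scores):
        groups[count].append(score)
    return {count: mean(scores) for count, scores in sorted(groups.items())}
```

This sketch does not compute the p-value; a statistics package would be the usual route for that.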

Keep in mind that we restricted this analysis to passive best practices, meaning those we can detect by looking only at Community metrics derived from our database. Most of these best practices require little-to-no work to implement beyond initial configuration. (Both passive and active best practices are described and defended at length here.) Passive best practices include:

- Enabling the weekly max

- Enabling grade passback

- Enabling points for reactions

- Enabling points for receiving Comments on Posts

- Enabling points for Accolades and awarding them to 1–20% of Posts

- Setting the weekly reset deadline for any day but Sunday

- Making Comment words worth as many points as Post words

- Enabling nudge notifications
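The checklist above could be tallied for a single Community roughly as follows. This is a sketch under stated assumptions: `CommunityConfig` and its field names are hypothetical rather than Yellowdig’s actual schema, and “as many points as” is read here as “at least as many.”

```python
from dataclasses import dataclass


@dataclass
class CommunityConfig:
    """Hypothetical settings snapshot; field names are illustrative only."""
    weekly_max_enabled: bool = False
    grade_passback_enabled: bool = False
    reaction_points_enabled: bool = False
    received_comment_points_enabled: bool = False
    accolade_points_enabled: bool = False
    accolades_awarded: int = 0
    posts: int = 0
    weekly_reset_day: str = "Sunday"
    points_per_comment_word: float = 0.0
    points_per_post_word: float = 1.0
    nudge_notifications_enabled: bool = False


def passive_practices_used(cfg):
    """Count how many of the eight passive best practices a Community meets."""
    accolade_share = cfg.accolades_awarded / cfg.posts if cfg.posts else 0.0
    checks = [
        cfg.weekly_max_enabled,
        cfg.grade_passback_enabled,
        cfg.reaction_points_enabled,
        cfg.received_comment_points_enabled,
        # Accolades must earn points AND go to 1-20% of Posts.
        cfg.accolade_points_enabled and 0.01 <= accolade_share <= 0.20,
        cfg.weekly_reset_day != "Sunday",
        # Assumption: "as many points as" means at least as many.
        cfg.points_per_comment_word >= cfg.points_per_post_word,
        cfg.nudge_notifications_enabled,
    ]
    return sum(bool(check) for check in checks)
```

A count like this is what would sit on the horizontal axis when plotting best practices against Community Health.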

Consider everything we didn’t take into account in our analysis: prompts, modules, assignments, and other content-related decisions that instructors often presume are crucial to good discussions. These decisions certainly matter.

For example, we see that weekly prompts with weekly deadlines have unavoidable detrimental effects that kill good discussions. In general, though, we find that instructors worry too much about getting students to generate a lot of content and think too little about encouraging students, through the point system, to create smaller amounts of interesting content that drive more sustained conversations.

Yellowdig’s point system isn’t just an automated scoring mechanism; it’s a holistic strategy for incentivizing behaviors that spark interesting conversations and build healthy Communities. Once those Communities are built and students see the value in participating, they no longer need strong incentives; they are intrinsically motivated to take part.

If you had told us, during our years as university instructors, that our discussions would almost certainly be better than average if we simply followed some passive best practices and gave our students more freedom, we might have accused you of selling us snake oil. But once you see the data, it’s hard to argue otherwise.

The upshot is this: if you want to increase student engagement while mitigating risk, the very first thing you should do is try out all of our best practices. While it is possible to succeed while ignoring them, success is less likely and is particularly difficult with larger classes. (Note how few blue points there are in the top-left quadrant of the large board graph.) Instructional designers, administrators of online programs, and anyone teaching at scale should pay particular attention to this finding. For large Communities, the effects of our best practices are stark.

Allowing the point system to change behavior as it was designed to do isn’t lazy or risky. It is an evidence-based, effective way to promote quality peer-to-peer interaction.

Samuel Kampa, Ph.D. is the former Client Success Analyst at Yellowdig. Samuel received his Ph.D. in Philosophy from Fordham University. He taught seven classes and nearly 200 undergraduate students at Fordham. He brings to Yellowdig that teaching experience, an enduring interest in improving pedagogy, and data science training that has helped expand Yellowdig’s data analysis capabilities and improve instructor training materials.